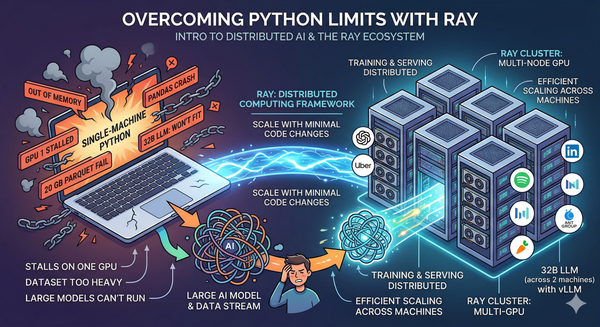

Ray for AI Teams: Distributed Computing, Model Serving, and Multi-GPU Inference

A hands-on walkthrough of Ray's full stack, ending with a real deployment that splits a large model across two nodes.

As AI models grow larger and datasets grow heavier, single-machine Python quickly hits its limits. Training stalls on one GPU while others sit idle. Pandas crashes on